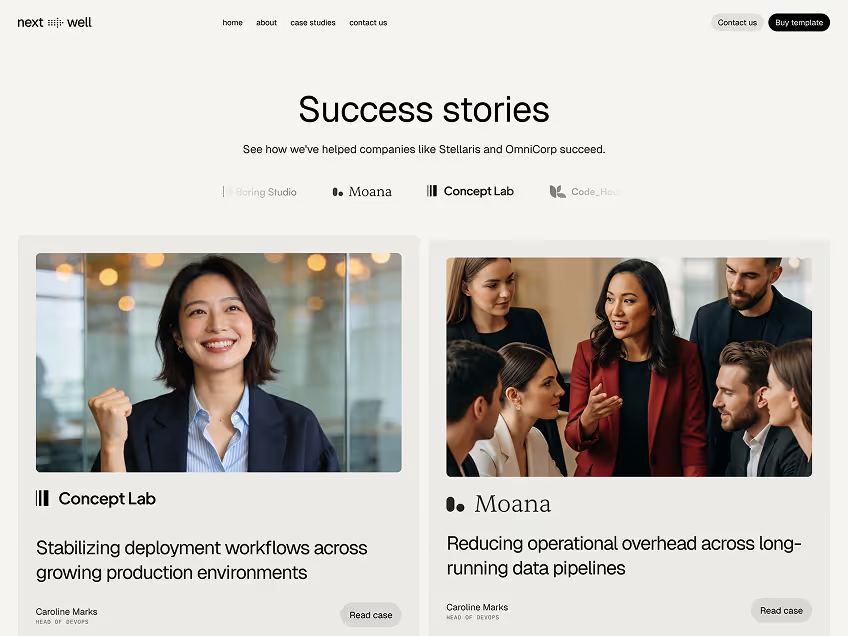

Improving execution reliability for multi-team automation workflows

Improving execution reliability for multi-team automation workflows

This case study shows how a growing product team restructured execution workflows to improve reliability, reduce manual intervention, and maintain speed as complexity increased. The focus wasn’t adding more tools, but designing a system that could run predictably under real conditions.

Context

As the product and team grew, execution became harder to manage. More deployments, more background jobs, and more automation meant higher load and more points of failure. What used to work informally started breaking down, especially during peak usage and releases.

The team needed a way to keep execution moving without relying on constant monitoring or ad-hoc fixes.

The approach

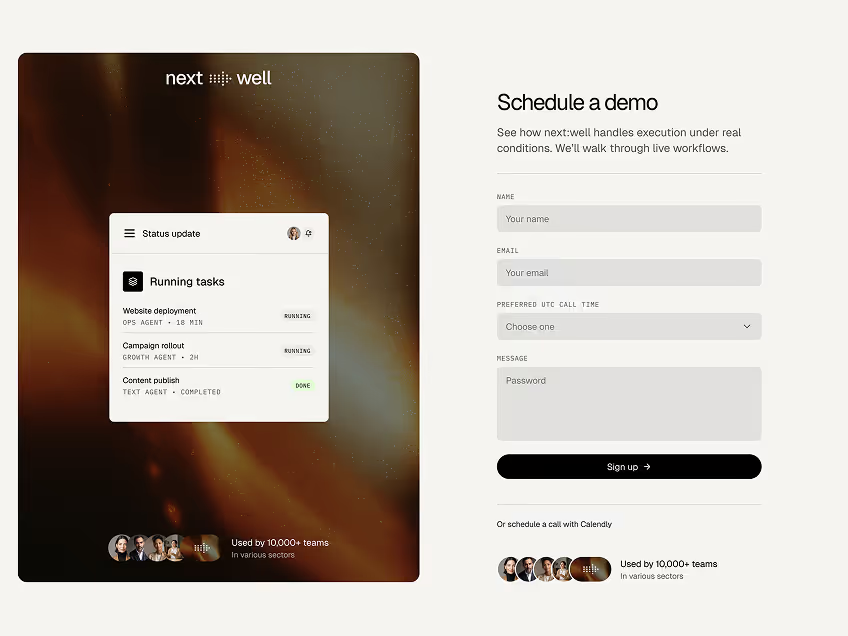

Execution was reorganized into agent-driven tasks with clear boundaries. Work was picked up based on capacity, failures were isolated, and retries followed defined rules instead of manual intervention.

Visibility was built into the workflow, so every run could be inspected without additional tooling. The system was designed to adapt to load rather than pushing everything at full speed.

“The new system has revolutionized our data processing workflows.”

The result

Once the new execution flow was in place, the system became noticeably quieter. Tasks completed consistently, failures were contained, and teams no longer needed to monitor every run.

Engineers could focus on building and improving workflows instead of managing them day to day.

The biggest shift wasn’t technical — it was operational. Execution became something the team trusted instead of something they worried about. The system handled repetition and scale, while humans stayed focused on direction and decisions.