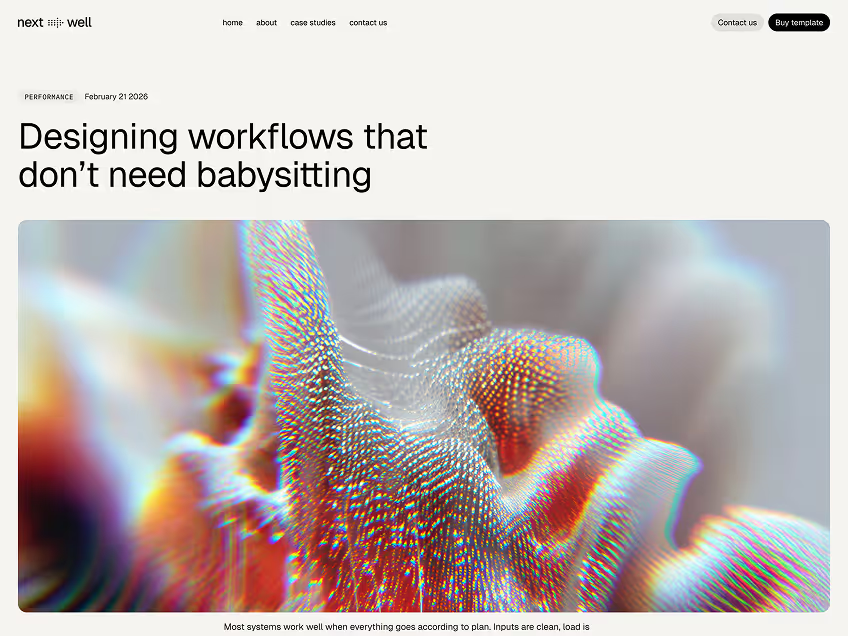

Designing workflows that don’t need babysitting

Most systems work well when everything goes according to plan. Inputs are clean, load is predictable, and failures are rare. In those conditions, almost any workflow looks reliable. The real test starts later, when usage grows, priorities shift, and execution becomes less controlled. That’s usually when teams discover that the hardest part of building systems isn’t speed, but consistency.

As systems evolve, execution tends to accumulate hidden complexity

Small manual steps are added to “just make things work.” Temporary fixes become permanent. Monitoring grows louder while clarity decreases. Over time, teams spend more energy reacting to execution than improving it. What once felt manageable begins to feel fragile.

This is where many teams make the wrong tradeoff. They chase more automation, more tooling, or more process, hoping that volume will compensate for a lack of structure. But execution doesn’t fail because there isn’t enough automation. It fails because responsibility, visibility, and recovery are poorly defined. Without clear boundaries, even well-intentioned systems become unpredictable.

Predictability doesn’t mean rigidity

It means that when something runs, you can reasonably expect how it will behave. You know where it starts, how it progresses, and what happens if something goes wrong. Predictable systems make failure easier to reason about, not more painful to experience. They reduce the cognitive load required to operate at scale.

One of the most overlooked aspects of execution is how systems handle partial failure. In real environments, things rarely fail completely. A task might time out while others continue running. A dependency might degrade without going fully offline. Systems that aren’t designed for this middle ground often respond poorly, either by stopping everything or by failing silently. Both outcomes erode trust.

Designing for recovery changes how systems feel to operate. When failures are isolated and visible, teams stop treating them as emergencies. They become signals instead of surprises. This shift alone can dramatically reduce operational stress, even if nothing else changes.
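To make "isolated and visible" concrete, here is a minimal Python sketch. It is an illustration under assumptions, not a reference implementation: the `Outcome` record, the `run_isolated` helper, and the task names are all hypothetical. Each task runs inside its own error boundary, so one timeout neither stops the rest of the batch nor disappears silently.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional


@dataclass
class Outcome:
    """Hypothetical record of what happened to one task."""
    name: str
    ok: bool
    value: Any = None
    error: Optional[str] = None


def run_isolated(tasks: Dict[str, Callable[[], Any]]) -> List[Outcome]:
    """Run every task; a failure is recorded and the batch keeps going."""
    outcomes = []
    for name, task in tasks.items():
        try:
            outcomes.append(Outcome(name, ok=True, value=task()))
        except Exception as exc:
            # Isolate the failure to this task and keep it visible.
            message = f"{type(exc).__name__}: {exc}"
            outcomes.append(Outcome(name, ok=False, error=message))
    return outcomes


def flaky_dependency() -> None:
    # Stand-in for a dependency that degrades without going fully offline.
    raise TimeoutError("upstream took too long")


outcomes = run_isolated({
    "load_orders": lambda: 42,
    "call_dependency": flaky_dependency,
    "send_report": lambda: "sent",
})
failed = [o.name for o in outcomes if not o.ok]
print("failed tasks:", failed)
```

The batch finishes, the report still goes out, and the failed task shows up by name with its error. That is the difference between a signal and a surprise.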

Another quiet source of instability is uneven load. Systems are often tested at peak or average conditions, but real usage fluctuates constantly. When execution runs at full speed regardless of context, small spikes can create outsized problems. Pacing execution based on capacity allows systems to absorb change instead of amplifying it.
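One simple way to pace execution is to cap how much work runs at once. The sketch below assumes an `asyncio`-based workload and uses a semaphore as the capacity gate; `run_paced`, `sample_job`, and the capacity of 3 are illustrative choices, not a recommendation for any particular system.

```python
import asyncio


async def run_paced(jobs, capacity):
    """Run all jobs, but never more than `capacity` at the same time."""
    gate = asyncio.Semaphore(capacity)
    in_flight = 0
    peak = 0

    async def paced(job):
        nonlocal in_flight, peak
        async with gate:  # wait here when the system is at capacity
            in_flight += 1
            peak = max(peak, in_flight)
            try:
                return await job()
            finally:
                in_flight -= 1

    results = await asyncio.gather(*(paced(job) for job in jobs))
    return results, peak


async def sample_job():
    # Stand-in for real work such as an API call or a database write.
    await asyncio.sleep(0.01)
    return "done"


# A spike of ten jobs arrives at once; only three run concurrently.
results, peak = asyncio.run(run_paced([sample_job] * 10, capacity=3))
print(len(results), "jobs finished; peak concurrency:", peak)
```

A spike of ten jobs still completes, but the peak number of jobs in flight never exceeds the configured capacity. The extra work waits its turn instead of piling onto whatever sits downstream.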

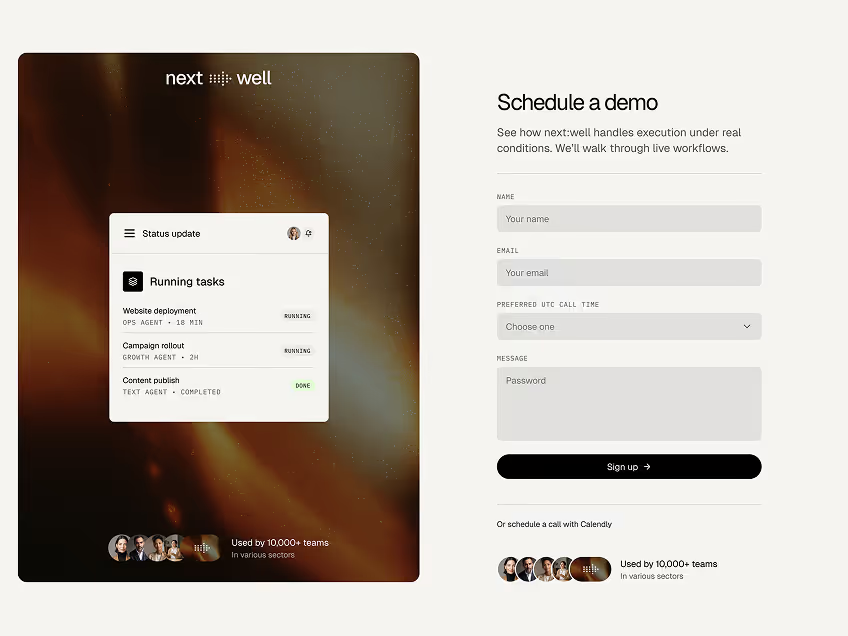

Over time, teams that invest in execution design notice something subtle

The system gets quieter. Fewer manual checks are needed. Fewer alerts require action. Work moves forward without constant supervision. This isn’t because the system became perfect, but because it became understandable.

Good execution systems don’t draw attention to themselves. They don’t rely on heroics. They don’t require someone to be “on top of things” at all times. They create space for teams to focus on decisions, improvements, and direction instead of coordination.